Amandine Le Pape, COO at Element, explains how Element is funding moderation tooling and development for all of Matrix.

Moderation of online information is topical for two completely unrelated reasons:

- Moderation is proving critical in the context of disinformation concerning the Russian invasion of Ukraine.

- Separately, moderation is also proving one of the biggest obstacles to the Digital Markets Act landing in Europe.

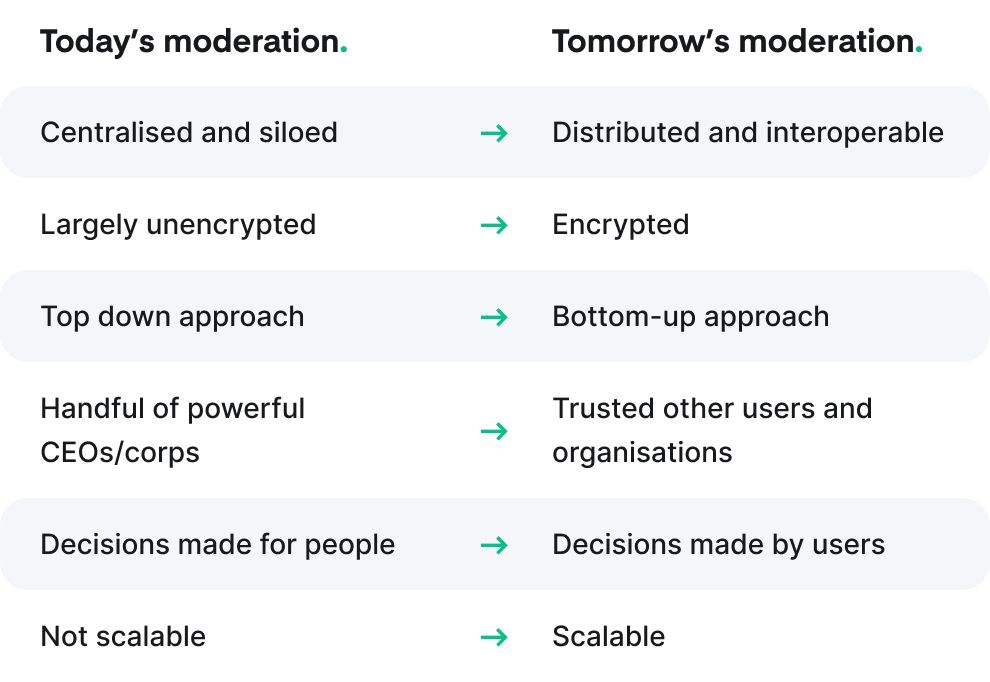

Let’s face it, the traditional approach to moderation is fundamentally broken. It’s neither scalable nor compatible with the idea of interoperable communication. Something has to change.

Today’s communications and social media are completely centralised and rarely end-to-end encrypted, which gives those providers complete control over what happens on their service (impacting billions of users).

Big Tech takes a top-down approach to moderation. It hires thousands of moderators who are locked away in a dungeon to look at content and remove material which doesn’t fit the provider’s particular beliefs or terms and conditions. These providers also have the authority to decide who can or cannot use their platform to communicate publicly. That’s not a huge problem while you agree with the provider’s political position, but the world (and commercial incentives) keeps changing - meaning that might not always be the case.

Meta, Twitter and a small handful of other tech companies potentially have as much power as the world’s politicians (if not more!), but their CEOs are far from democratically elected officials. Profit-driven, US-based Big Tech firms have become the filter through which much of the world's information now percolates.

The world is complex and nuanced. Decisions on what is right or wrong can be obvious, but more often there is an awful lot of grey out there: from one person’s freedom fighter being another person’s terrorist, to opposing points of view which don’t need to be presided over by a single entity.

A better world is a place where users can tune what they see to align with their values, putting them in control rather than subjecting them to a single provider’s algorithm. This offers meaningful choice and real transparency. Enabling users to dial the volume of certain content up or down, based on its perceived value or the trust they place in its source, would make disinformation bots less influential over time - and eventually the disinformation would be lost in its own echo chamber. People could even be encouraged to see whether their beliefs are aligned with the majority or the minority.

The solution for Matrix

An evolving moderation system is an existential requirement for Matrix as an open network for communication available to everyone and anyone. Without appropriate moderation tooling, the network would probably be overwhelmed by abuse, spam and obnoxiousness. This is precisely why Matrix had no choice but to tackle the question “how do you moderate an open network?” pretty rapidly. And the good news is that the answer can be applied to other networks too.

An open, decentralised and end-to-end encrypted network has no single point of control, and it can’t mine the content of messages. So Matrix has no other option but to give users and administrators the tools to moderate the network. These tools include reporting content, ignoring or banning a user, and blocking traffic from a given user or traffic matching a given pattern.

Matrix also needs tools to fit its decentralised nature. Access Control Lists (ACLs) can be used by moderators to prevent given servers from participating in conversations, and these ACLs can be shared with the rest of the network, avoiding every moderator having to reinvent these rules independently. If a trusted user or server declares a given server is propagating illegal content, other users/servers can opt to benefit from this information immediately and choose to adopt this rule.
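To make the idea of shared ACLs concrete, here is a minimal sketch in Python. It is loosely modelled on the `allow`/`deny` glob lists of Matrix’s `m.room.server_acl` state event, but it is an illustration rather than how any homeserver implements it: the `ServerAcl` class, its `adopt` method and the example server names are all hypothetical, and details such as IP-literal handling are left out.

```python
import re
from dataclasses import dataclass, field


def glob_to_regex(pattern: str) -> re.Pattern:
    """Translate a simple glob: '*' matches any run of characters, '?' exactly one."""
    parts = []
    for ch in pattern:
        if ch == "*":
            parts.append(".*")
        elif ch == "?":
            parts.append(".")
        else:
            parts.append(re.escape(ch))
    return re.compile("".join(parts), re.IGNORECASE)


@dataclass
class ServerAcl:
    """Hypothetical ACL: a server is allowed if it matches `allow` and not `deny`."""
    allow: list[str] = field(default_factory=lambda: ["*"])
    deny: list[str] = field(default_factory=list)

    def is_allowed(self, server_name: str) -> bool:
        # Deny rules take precedence over allow rules.
        if any(glob_to_regex(p).fullmatch(server_name) for p in self.deny):
            return False
        return any(glob_to_regex(p).fullmatch(server_name) for p in self.allow)

    def adopt(self, shared_deny: list[str]) -> "ServerAcl":
        """Return a new ACL that also applies deny rules published by a trusted party."""
        merged = list(dict.fromkeys(self.deny + shared_deny))  # de-duplicate, keep order
        return ServerAcl(allow=list(self.allow), deny=merged)


# A moderator starts with an open ACL, then opts in to a trusted admin's deny list.
room_acl = ServerAcl()
trusted_deny_list = ["abuse.example", "*.abuse.example"]
updated_acl = room_acl.adopt(trusted_deny_list)
```

Adopting the shared list is an explicit step: `room_acl` itself is left unchanged, so the moderator can keep, extend or drop the shared rules at any time.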

Matrix takes moderation tooling to the next level because:

- This method of moderation scales in step with the number of users

- A server admin can build on the experience of trusted servers/admins

- Users and admins can choose who to trust and who to block, and can change their minds at any time

Conventional moderation is outmoded because:

- Users have no choice but to believe that <insert BigTech> is right

- There is no transparency: users cannot see what is marked as bad, or why

- The size of the provider's moderation team needs to grow with the network

So far a lot of these tools have been deployed manually in the Matrix network. The next step is to package them up and make them available ‘out of the box’. This will not only make them available to all server admins and moderators, but also improve automation (e.g. automatically spotting patterns and blocking them).

The idea is to allow server admins, community administrators, and even users(!), to both publish their own reputation lists of servers and users, and to ‘tune’ the level of trust and interest they have in them.

Now add in the ability to subscribe to others’ lists and republish your very own personal blend of what you consider quality content - a curated moderation playlist if you like - and you’ve built an ecosystem of reputation data which allows different parties to work together on mitigating abuse, and creates a completely new economy of reputation-based ‘decentralised’ moderation.
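As a rough illustration of how subscribing, tuning trust and republishing could fit together, here is a small Python sketch. Everything in it is an assumption made for the example: the `ReputationList` and `Subscriber` names, the score range of -1 to 1, the trust weights of 0 to 1, and the weighted-average blending rule are hypothetical and not taken from any Matrix specification.

```python
from dataclasses import dataclass, field


@dataclass
class ReputationList:
    """A published list: entity (user or server) -> score in [-1, 1]."""
    publisher: str
    scores: dict[str, float] = field(default_factory=dict)


@dataclass
class Subscriber:
    """Someone who tunes how much they trust each publisher (weight in [0, 1])."""
    trust: dict[str, float] = field(default_factory=dict)

    def blended_score(self, entity: str, lists: list[ReputationList]) -> float:
        """Trust-weighted average of the scores that trusted publishers give an entity."""
        total = 0.0
        weight_sum = 0.0
        for rep in lists:
            weight = self.trust.get(rep.publisher, 0.0)
            if weight > 0 and entity in rep.scores:
                total += weight * rep.scores[entity]
                weight_sum += weight
        # Entities nobody trusted has rated stay neutral.
        return total / weight_sum if weight_sum else 0.0

    def republish(self, name: str, lists: list[ReputationList]) -> ReputationList:
        """Publish the subscriber's own blend so that others can subscribe to it."""
        entities = sorted({e for rep in lists for e in rep.scores})
        return ReputationList(name, {e: self.blended_score(e, lists) for e in entities})


# Hypothetical publishers: a fact checker, a friend, and a bot farm rating itself.
lists = [
    ReputationList("factcheck", {"@bot:spam.example": -1.0}),
    ReputationList("friend", {"@bot:spam.example": 0.0}),
    ReputationList("botfarm", {"@bot:spam.example": 1.0}),
]

# This user trusts the fact checker most, the friend a little, the bot farm not at all.
alice = Subscriber(trust={"factcheck": 0.75, "friend": 0.25})
alice_score = alice.blended_score("@bot:spam.example", lists)  # -0.75
alice_list = alice.republish("alice", lists)
```

Because the bot farm carries no trust for this user, its self-published rating has no effect on the blend, while a different user with different weights would arrive at a different score from the same lists.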

This means interoperable communication is good for victims too: they can report abuse once, and it will propagate through the network automatically to anyone who agrees it is abuse (rather than having to send reports to every single party).

So how would the Russian disinformation campaign unfold in a fully operational decentralised reputation world? Users would subscribe to trusted fact checkers’ reputation lists, which would decrease the rating of the bots and the fake content, leaving the disinformation alone in an echo chamber without an audience. The bots might try to publish their own reputation lists… but these in turn would be flagged by fact checkers or other trusted parties. Would anyone have to play God on what is true or false? No. Would anyone be the sole decision maker on whether a particular news source should be censored? No. Every server admin, every community admin, every user could make their own choice to listen to it or not.

So, is it possible to moderate an open network?

Yes, and doing this will radically improve the future of online communication! This kind of moderation also helps pave the way for interoperable communication, which brings us back to the Digital Markets Act, a legislative proposal currently under consideration by the European institutions. The act aims to identify the gatekeepers in tech and ensure they enable competition and choice for users in the marketplace. However, contrary to current belief, by mandating some level of interoperability and opening up digital communication, the DMA could unlock safer and more scalable moderation for everyone.

Interoperable communication means there’s no choice but to build scalable, crowdsourced moderation tools - solving the disinformation issue and giving users control over what they see or hear.

Sometimes taking the harder path is the only way to grow and be free. Today we’ve hit that point. It is time for users to get their freedom back and for regulators and tech companies to encourage a marketplace where customers are free to change suppliers without having to leave their relationships and memories behind. Otherwise we will just continue falling into some Orwellian dystopia, and I doubt anyone is hoping for that.